WHY EVEN GOOD MODELS FAIL IN CHAOTIC SYSTEMS (A MARCH MADNESS EXPERIMENT)

Written By: Chet Hayes, Vertosoft CTO

For the last few years, I’ve been treating my March Madness bracket like an engineering problem. Every season, the approach gets more sophisticated: more features, better weights, blended performance metrics, and a shift in modeling architecture.

And every year, I still lose.

For a long time, I thought the failure was technical. I assumed the “answer” was just one more tweak away, that if I could just sharpen the probabilities or refine the variables, the noise would finally settle into a clear signal.

After reflecting on the results of the past few years, I realized that maybe I needed to look at the problem differently.

From Prediction to Description

In theory, throwing more data and compute at a problem should yield clarity. In practice, when dealing with a complex system like a 68-team tournament, it often just gives you a higher-fidelity view of the chaos.

I shifted my focus this year. Instead of trying to force the model to “pick winners,” I used it to understand matchup dynamics and how outcomes compound over six rounds. The goal wasn’t certainty; it was an attempt to map out how the tournament actually behaves.
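To make the compounding concrete, here is a minimal sketch of the arithmetic. It assumes a team has the same win probability in every game and that games are independent, which real tournaments don't guarantee. The per-game probabilities and the `title_probability` helper are illustrative, not taken from my model.

```python
def title_probability(per_game_win_prob: float, rounds: int = 6) -> float:
    """Chance of winning every round, assuming independent games
    with the same win probability each time (a simplification)."""
    return per_game_win_prob ** rounds


# Illustrative per-game win probabilities (assumed values, not model output).
for p in (0.70, 0.80, 0.90):
    print(f"{p:.0%} per game -> {title_probability(p):.1%} to win six straight")
```

Even a team that is a 90% favorite in every single game wins six in a row only a little more than half the time under these assumptions.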

This approach provided a much more useful set of insights:

- It’s a descriptive tool, not a crystal ball: It doesn’t tell me who will win; it tells me how often each team shows up in the Final Four across 100,000 simulations.

- Identifying the Drop-offs: It’s great at highlighting the “cliffs,” where a strong team hits a specific style of play it simply isn’t built to handle.

- The 23% Reality Check: My top-rated team only wins the tournament in 23% of the simulations. That means they lose 77% of the time. In most business contexts, we wouldn’t call that a “favorite”; we’d call it a high-risk gamble.
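The kind of simulation behind numbers like these can be sketched in a few lines. This is not my actual model: the 8-team field, the ratings, the logistic `win_prob` formula, and its `scale` parameter are all hypothetical stand-ins, chosen only to show how running a bracket many times turns into title frequencies.

```python
import random
from collections import Counter


def win_prob(rating_a: float, rating_b: float, scale: float = 10.0) -> float:
    """Logistic win probability for team A over team B.
    Assumed functional form for illustration only."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / scale))


def simulate_bracket(teams, ratings, rng):
    """Play one single-elimination bracket and return the champion.
    `teams` is in bracket order and its length must be a power of two."""
    alive = list(teams)
    while len(alive) > 1:
        next_round = []
        # Pair adjacent teams: (0 vs 1), (2 vs 3), ...
        for a, b in zip(alive[0::2], alive[1::2]):
            p = win_prob(ratings[a], ratings[b])
            next_round.append(a if rng.random() < p else b)
        alive = next_round
    return alive[0]


def champion_frequencies(teams, ratings, n_sims=100_000, seed=0):
    """Share of simulations in which each team wins the title."""
    rng = random.Random(seed)
    counts = Counter(simulate_bracket(teams, ratings, rng) for _ in range(n_sims))
    return {team: counts[team] / n_sims for team in teams}


# Hypothetical 8-team field with made-up ratings.
ratings = {"A": 30, "B": 12, "C": 20, "D": 18, "E": 26, "F": 10, "G": 22, "H": 16}
teams = list(ratings)
freqs = champion_frequencies(teams, ratings, n_sims=20_000)
for team, share in sorted(freqs.items(), key=lambda kv: -kv[1]):
    print(f"{team}: {share:.1%}")
```

The output is a distribution, not a pick: every team gets a share of the titles, and the shares always sum to one. The same counting approach works for any round, such as Final Four appearances, by recording who is still alive at that stage.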

This Isn’t Just About Basketball

As I was digging through the simulation data, the parallels to the real world became impossible to ignore. Whether we are forecasting demand, managing risk in complex infrastructure, or deploying AI agents into strategic roles, we fall into the same trap.

We have an expectation that if a model is “good enough,” it should eliminate uncertainty. But in systems where one small variable can change the entire trajectory, that clarity is an illusion.

The best a model can do is define the range of outcomes. It doesn’t remove the risk; it just makes it visible. It provides an honest picture of the “landscape of the unknown,” which is a much better, if less satisfying, place to make a decision from.

The Two-Bracket Strategy

Despite the 100,000 simulations and the refined logic, I’m still submitting two brackets:

- The “Rational” Bracket: Strictly following the probabilities and simulation peaks.

- The “Human” Bracket: Built on gut feelings, questionable upsets, and a few teams I probably trust more than the data suggests I should. (Looking at you, BYU.)

The model tells me that neither is likely to win. In a truly chaotic system, the person picking based on which mascot would win in a fight has just as good a chance of beating the “perfect” algorithm.

In chaotic systems, the edge doesn’t come from predicting the exact outcome. It comes from understanding the range of outcomes and deciding how to play inside it.

The model can’t win your bracket. But it can tell you how uncertain winning really is.

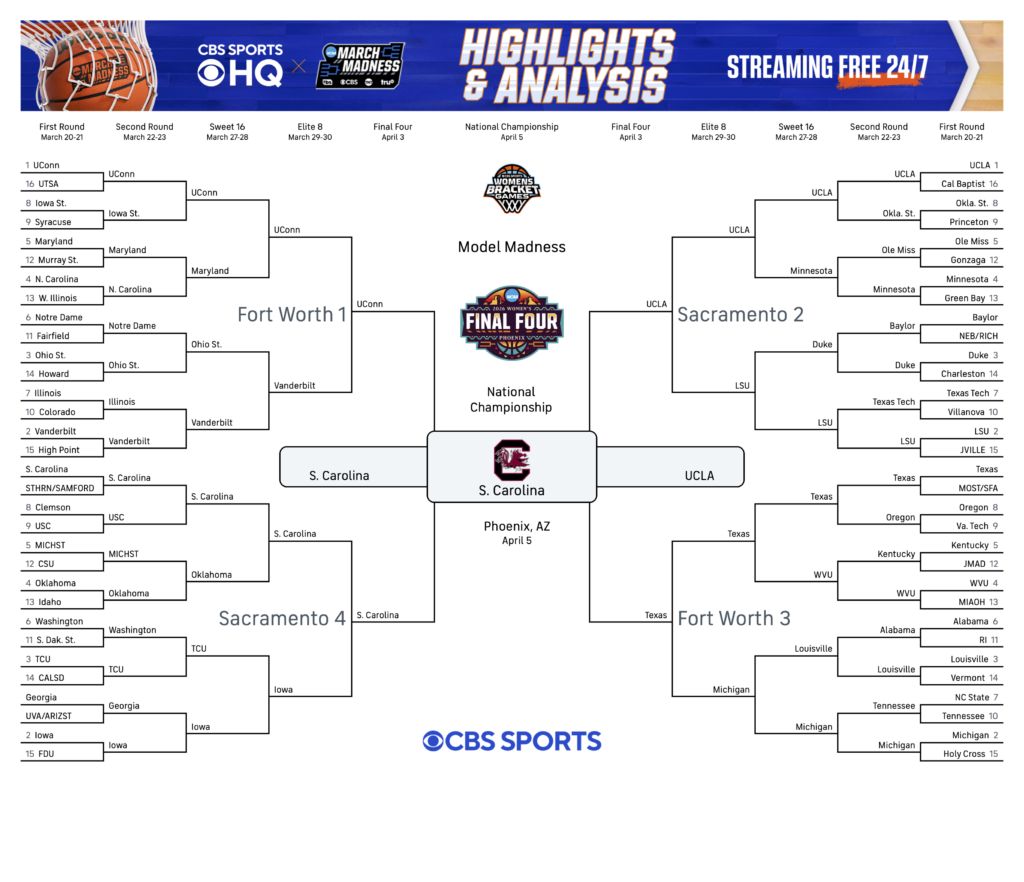

Here are my 2026 Men’s and Women’s March Madness Tournament Brackets: